Project

Extremely Personal AI For Physical Health

I watched my father die after 17 years of specialists who never talked to each other. I was 380 pounds, turning 40, and couldn't even get a doctor's appointment. So I built my own AI health system — with memory, lab interpretation, fitness tracking, and persona-based coaching — and lost 95 pounds.

Why

17 years of watching it go wrong.

My father was diagnosed with congestive heart failure at 48. Quadruple bypass surgery. From that point on, he was on a rotating regimen of 30–40 prescription medications for the rest of his life.

He was a pack-a-day smoker even after the bypass. He lied to his doctors about it because he felt like something else was really wrong and they weren't figuring it out. Over the years, he developed neuropathy in his legs, started getting blood clots regularly — one or two roto-rooter procedures a year at first, then three or four a year toward the end. He lost every toe, slowly, one by one. His legs were blackened.

The thing that stuck with me was how the specialists operated in silos. His heart doctor would see a bad number and add a medication to bring it up. His kidney doctor would see a different bad number and add a medication to bring it down. But nobody took a holistic view of his entire body. My assumption — and one I later explored deeply with AI — is that medications improving one organ were quietly degrading another. And that compounded over 17 years.

The Shift

Late 2024. Something changed.

Around the end of 2024, something shifted with my father. He caught a cold and it took him out for almost six weeks. At the same time, I made an internal decision: I was turning 40 in 2025, I was 380 pounds, and I was not going to follow this path.

I'd worked on my weight over the previous five years — went from 425 down to 350, back up to 380. Clearly whatever I was doing wasn't enough. I needed to try something different.

New Mexico has terrible healthcare access. I'd tried to work with a PCP to get on semaglutide, but it was 8–10 months to get an appointment, lab results would get lost — it just wasn't working in a life with this many priorities. So I turned to LLMs. I asked one to research compounded semaglutide options, ran it through the "Is It Stupid?" check, got the expected 20 disclaimers about not being a doctor, and made my own call. I understand how systems work. The body is another system. I was willing to trust my own understanding with the information I was reading.

February 2025, I started on compounded semaglutide. The first few months were weird — nausea, and then I started to understand what "food noise" was. I could eat very little and feel completely satisfied. Nothing ever sounded "good." I lost 10–20 pounds over three months.

The Holistic View

AI saw what the specialists didn't.

Around the same time, I got access to my father's lab results. I started feeding them through LLMs to understand what the measurements meant and what they showed over time.

The conclusion was stark: his heart, liver, and kidneys were all functioning at roughly 20% of normal. You could trace the decline in the history — a slow, steady slide across all three. None of his doctors were telling him this. The AI looked at everything together and said what no individual specialist had: this is end-stage. Without transplants of multiple organs, he doesn't have much time left.

My father had rebound periods where he'd seem better for a few weeks, then he'd go downhill and sleep for days. It was hard to reconcile what the AI was saying with those good stretches. But sure enough, it happened exactly as predicted. One day he couldn't stand. The doctors said there was nothing more they could do without amputating. He didn't want that. They sent him home on hospice, and three weeks later, he was gone.

The AI was accurate on timeline, on what was happening, and on how things would go. I was skeptical the entire time. I watched it happen in real time.

The Wake-Up Call

Two stomach bugs and a diagnosis.

In May 2025 — before my father passed — I caught a stomach bug. Terrible, not fun. A few days later I felt decent, and then a second one hit. That seemed abnormal, so I went to urgent care. They sent me to the ER for tests they couldn't do. The ER did an ultrasound and found I had NAFLD — non-alcoholic fatty liver disease. The stomach bugs were unrelated. I just got lucky they looked.

My PCP was still booked out 10 months. So I went and got full lab work done out of pocket and fed everything through an LLM. The picture: borderline pre-diabetic, liver enzymes off, testosterone getting dangerously low. The AI connected the dots — this is the same metabolic path your father was on. This is where it starts.

That was the moment I decided to build something real.

The System

An AI that actually knows me.

The memory model was inspired by 50 First Dates. Drew Barrymore's character wakes up every morning with no memory of the day before, so she watches a video tape to catch up on her life. That's how I thought about long-term AI memory — the AI instructs itself to save memories worth keeping via tool calls into a MySQL database. Every conversation, the relevant memories get injected into the system prompt based on which tools are active for that persona. It starts each session knowing exactly where we left off.
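A minimal sketch of that injection step, assuming each saved memory is tagged with the tool it relates to. The `Memory` and `Persona` shapes, the tool names, and the 20-memory cap are illustrative assumptions, not the actual schema.

```typescript
// Hypothetical shapes: the real MySQL schema is not shown in this write-up.
interface Memory {
  tool: string;      // assumed tag linking a memory to a tool, e.g. "food_log"
  content: string;
  createdAt: string; // ISO 8601 timestamp
}

interface Persona {
  name: string;
  systemPrompt: string;
  enabledTools: string[];
}

// Keep only memories whose tool is enabled for this persona, newest first,
// and append them to the persona's base system prompt.
function buildSystemPrompt(persona: Persona, memories: Memory[], limit = 20): string {
  const enabled = new Set(persona.enabledTools);
  const relevant = memories
    .filter((m) => enabled.has(m.tool))
    .sort((a, b) => (a.createdAt < b.createdAt ? 1 : a.createdAt > b.createdAt ? -1 : 0))
    .slice(0, limit);

  if (relevant.length === 0) return persona.systemPrompt;

  const lines = relevant.map((m) => `- [${m.createdAt.slice(0, 10)}] ${m.content}`);
  return `${persona.systemPrompt}\n\nSaved memories:\n${lines.join("\n")}`;
}

// Example: Lucy only has the (hypothetical) food_log tool, so the lab memory is left out.
const exampleLucy: Persona = {
  name: "Lucy",
  systemPrompt: "You are Lucy, a nutritionist and exercise coach.",
  enabledTools: ["food_log"],
};
const examplePrompt = buildSystemPrompt(exampleLucy, [
  { tool: "food_log", content: "Goals: low seed oil, low saturated fat", createdAt: "2025-06-01T08:00:00Z" },
  { tool: "lab_history", content: "Liver enzymes were off on the last panel", createdAt: "2025-06-02T08:00:00Z" },
]);
```

Filtering by tool keeps each persona's prompt small and means a persona never sees memories from a domain it has no tools for.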

The key design decision was personas. Each persona is a database record — a system prompt that defines its personality, and a set of tool checkboxes that control what it can access. Want a new persona? Write a system prompt, check the boxes for which tools it should have, done. Each user gets their own set of personas, their own memories, their own data.
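As a rough illustration, a persona record with its tool checkboxes could look something like the following. The tool names and field names are hypothetical; only the idea of "system prompt plus a set of checked tools, per user" comes from the description above.

```typescript
// Hypothetical tool names; the actual tool list is not given in this write-up.
const ALL_TOOLS = ["save_memory", "log_food", "query_exercise", "query_labs"] as const;
type ToolName = (typeof ALL_TOOLS)[number];

interface PersonaRecord {
  userId: number;                   // each user owns their own personas
  name: string;
  systemPrompt: string;             // defines the personality
  tools: Record<ToolName, boolean>; // one boolean per checkbox
}

// "Write a system prompt, check the boxes": build a record from the checked tools.
function createPersona(
  userId: number,
  name: string,
  systemPrompt: string,
  checked: ToolName[],
): PersonaRecord {
  const tools = {} as Record<ToolName, boolean>;
  for (const tool of ALL_TOOLS) {
    tools[tool] = checked.includes(tool);
  }
  return { userId, name, systemPrompt, tools };
}

// The list of tools that would be exposed to the model for this persona.
function enabledTools(persona: PersonaRecord): ToolName[] {
  return ALL_TOOLS.filter((tool) => persona.tools[tool]);
}

const exampleLabPersona = createPersona(
  1,
  "Lab Interpreter",
  "You interpret bloodwork and track trends over time.",
  ["query_labs", "save_memory"],
);
```

Because a persona is just data, adding one needs no code change: a new row with a prompt and a different set of checked boxes is enough.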

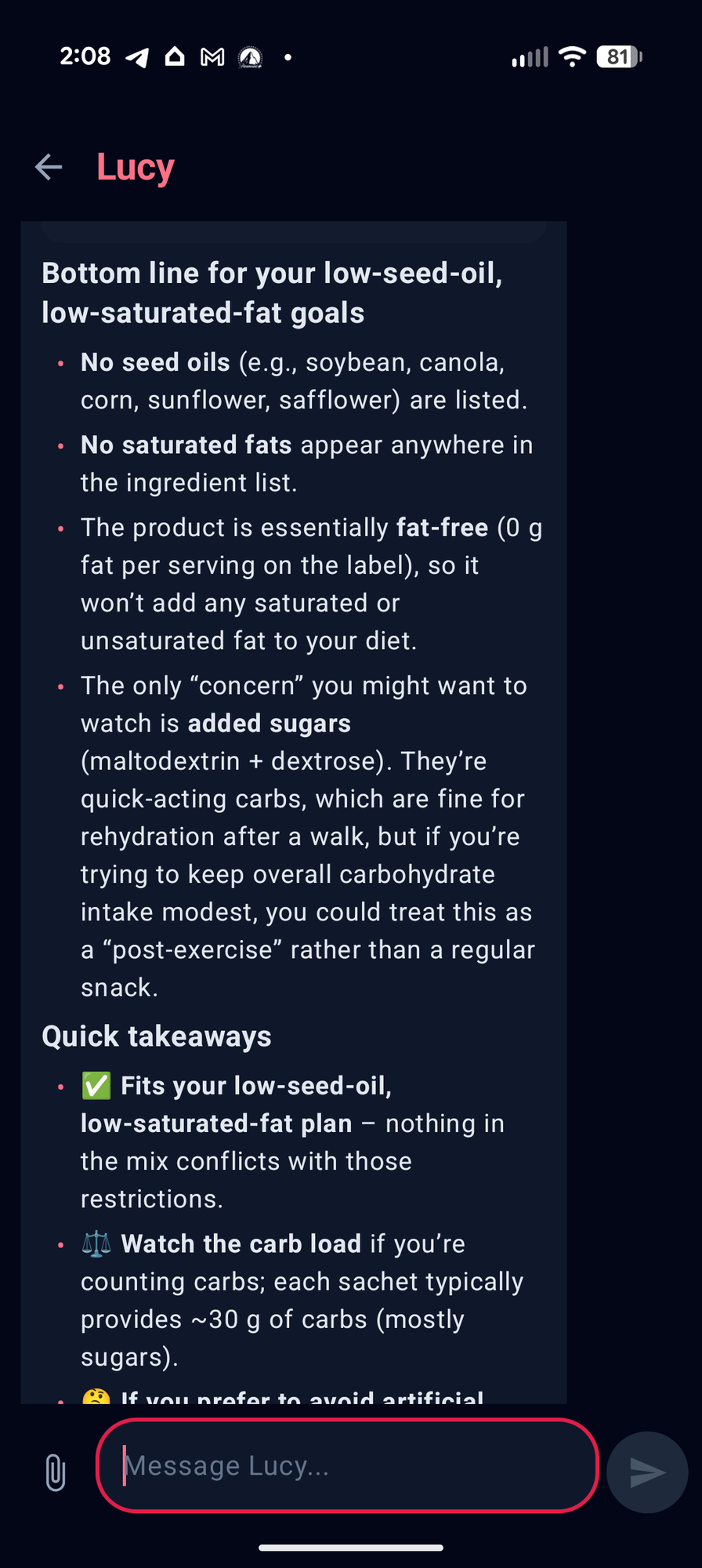

- Lucy — Nutritionist / Exercise Coach — named after the Barrymore character. I tell her what I ate in approximate proportions, and her tool calls insert food logs into the database with macro categories and a summary of each meal. I tell her what movement I did, and she rates my day. I can snap a picture of a nutrition label and ask "is this worth eating?" — she knows my dietary goals and evaluates against them.

- Daily Score — every day gets a rating out of 10. 8–10 means I'm going in the right direction. 5–7 means I'm maintaining. Under 5 means I'm going the wrong way. The goal is simple: stay at 5 or above every day, push for 7+ to keep leaning in the right direction.

- Lab Interpreter — when I get bloodwork done, I feed it in. It has tool calls to query my lab history, compare against previous results, track trends, and tell me what's improving and what needs attention — the holistic view my father never got.

Lucy evaluating a product against my low-seed-oil, low-saturated-fat goals
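The Daily Score bands described in the list above can be written down as a small function. This is only a sketch of the banding; it assumes scores are whole numbers from 0 to 10, and how Lucy actually arrives at the number is not covered here.

```typescript
type Direction = "right direction" | "maintaining" | "wrong direction";

// Maps a whole-number daily score (0-10) to the band it falls in:
// 8-10 right direction, 5-7 maintaining, under 5 wrong direction.
function classifyDailyScore(score: number): Direction {
  if (!Number.isInteger(score) || score < 0 || score > 10) {
    throw new RangeError(`score must be a whole number from 0 to 10, got ${score}`);
  }
  if (score >= 8) return "right direction";
  if (score >= 5) return "maintaining";
  return "wrong direction";
}
```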

V1

Next.js, one model, simple memory.

The first version was a Next.js app hitting DeepInfra as an inference provider, running OpenAI OSS 120B. Memory was a MySQL table — the AI's system prompt simply said "if you think there's a memory worth saving, save it using this tool." Personas were defined in another table: a system prompt and a set of available tools. That core architecture — personas as database records with configurable tool access — has survived every version since.
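A sketch of what that memory tool might look like, assuming the OpenAI-style function-calling format (a JSON Schema tool definition, with arguments arriving as a JSON string). The tool name `save_memory`, its single `content` parameter, and the in-memory array standing in for the MySQL table are all assumptions made for illustration.

```typescript
// Tool definition in the OpenAI-style function-calling shape (assumed format).
const saveMemoryTool = {
  type: "function",
  function: {
    name: "save_memory",
    description: "Save a memory worth keeping for future conversations.",
    parameters: {
      type: "object",
      properties: {
        content: { type: "string", description: "The fact or preference to remember." },
      },
      required: ["content"],
    },
  },
} as const;

interface ToolCall {
  name: string;
  arguments: string; // JSON-encoded arguments, as function calling returns them
}

interface MemoryRow {
  userId: number;
  persona: string;
  content: string;
  createdAt: string;
}

// Stand-in for the MySQL memories table so the sketch runs without a database.
const memoryTable: MemoryRow[] = [];

// Validates the model's tool call and stores the memory; returns a short
// status string that would be sent back to the model as the tool result.
function handleToolCall(userId: number, persona: string, call: ToolCall): string {
  if (call.name !== saveMemoryTool.function.name) return `unknown tool: ${call.name}`;

  let args: unknown;
  try {
    args = JSON.parse(call.arguments);
  } catch {
    return "invalid JSON arguments";
  }

  const content = (args as { content?: unknown } | null)?.content;
  if (typeof content !== "string" || content.trim() === "") return "missing content";

  memoryTable.push({ userId, persona, content: content.trim(), createdAt: new Date().toISOString() });
  return "memory saved";
}
```

Validating the arguments before writing matters because the model decides when to call the tool and can produce malformed JSON.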

V2 — Current

Android app, real backend, multiple models.

The Next.js web app still exists for desktop use, but 90% of the time we're on the Android app now — written in Kotlin. It just makes more sense as something you log from the couch, or when you're snapping a picture of a label at the grocery store. Both are gated behind authentication with 2FA — this is extremely personal data.

Both hit an Express.js backend API running on a DigitalOcean droplet. This solved a real problem — AI "thinking" and longer responses would exceed Vercel's 30-second timeout on the free tier. The backend handles all the DeepInfra calls directly with no timeout ceiling.

Users can select which model to use per persona. Most conversations run on either Kimi K2.5 or OpenAI OSS 120B Turbo. Image analysis — like nutrition label scanning — uses Gemma 3 because it's fast and cheap for that specific job.
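The routing rule is simple enough to sketch: use the persona's chosen model for text, and switch to the vision model when a message carries an image. The model strings below are placeholders, not verified provider model IDs, and the message shape is an assumption.

```typescript
// Placeholder identifiers only; substitute the provider's real model IDs.
const VISION_MODEL = "gemma-3-placeholder";
type ChatModel = "kimi-k2.5-placeholder" | "oss-120b-turbo-placeholder";

interface PersonaModelSetting {
  personaName: string;
  model: ChatModel; // chosen by the user, per persona
}

interface IncomingMessage {
  text: string;
  imageBase64?: string; // present when the user attaches a photo, e.g. a nutrition label
}

// Image messages go to the vision model; everything else uses the persona's model.
function pickModel(setting: PersonaModelSetting, message: IncomingMessage): string {
  return message.imageBase64 !== undefined ? VISION_MODEL : setting.model;
}
```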

Health Connect data comes in through a daily cron job that polls the API and caches it in the database. Food logs, exercise logs, and health metrics are all accessed through tool calls — they're not stuffed into the system prompt. Lucy has tools that insert food logs with macro breakdowns and meal summaries. She has tools to query exercise history. She has tools to pull Fitbit data — resting heart rate, steps, sleep, activity. Each persona only sees the data its tools allow.
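One way to enforce "each persona only sees the data its tools allow" is to check the persona's allow-list at the point where a tool call is executed. The sketch below assumes that approach; the tool names and the stubbed return values are hypothetical and stand in for real database queries.

```typescript
type ToolHandler = (userId: number, args: Record<string, unknown>) => unknown;

// Hypothetical registry; the handlers return stub data instead of querying MySQL.
const toolRegistry = new Map<string, ToolHandler>([
  ["query_exercise_history", (userId) => [{ userId, date: "2025-06-01", activity: "walk", minutes: 30 }]],
  ["query_health_metrics", (userId) => ({ userId, metric: "resting_heart_rate", value: 65 })],
]);

// Runs a tool only if it is enabled for the persona making the call.
function runTool(
  allowedTools: string[],
  userId: number,
  toolName: string,
  args: Record<string, unknown> = {},
): unknown {
  if (!allowedTools.includes(toolName)) {
    throw new Error(`tool "${toolName}" is not enabled for this persona`);
  }
  const handler = toolRegistry.get(toolName);
  if (handler === undefined) {
    throw new Error(`unknown tool "${toolName}"`);
  }
  return handler(userId, args);
}
```

Checking at execution time, rather than only when listing tools to the model, means a hallucinated or stale tool call still cannot reach data the persona was never given.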

V3 — Under Development

Menu planning and household management.

This thing is turning into a full household nutrition manager, which is sick. The next version adds recipe knowledge and shopping list generation.

We teach Lucy a recipe we cook — ingredients, proportions, serving sizes — and she knows exactly what the macros are per serving. We do a lot of meal prep now, and multiple meal prep recipes for the week are already in the system. She knows exactly how much chicken, rice, beans, whatever we need for a full week of lunches for multiple people.
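The arithmetic behind this is straightforward. A minimal sketch, assuming each ingredient is stored with its weight and per-100 g macros (field names are hypothetical), and using the standard 4/4/9 kcal-per-gram factors for protein, carbohydrate, and fat:

```typescript
interface Ingredient {
  name: string;
  grams: number;          // amount used in the full recipe
  proteinPer100g: number;
  carbsPer100g: number;
  fatPer100g: number;
}

interface Recipe {
  name: string;
  servings: number;       // how many servings the full recipe makes
  ingredients: Ingredient[];
}

interface Macros {
  protein: number;
  carbs: number;
  fat: number;
  calories: number;
}

// Sums macros over all ingredients, then divides by the serving count.
function macrosPerServing(recipe: Recipe): Macros {
  let protein = 0;
  let carbs = 0;
  let fat = 0;
  for (const ing of recipe.ingredients) {
    const factor = ing.grams / 100;
    protein += ing.proteinPer100g * factor;
    carbs += ing.carbsPer100g * factor;
    fat += ing.fatPer100g * factor;
  }
  const n = recipe.servings;
  const perServing = { protein: protein / n, carbs: carbs / n, fat: fat / n };
  return { ...perServing, calories: 4 * perServing.protein + 4 * perServing.carbs + 9 * perServing.fat };
}

// Scales ingredient weights to cover a target number of servings,
// e.g. a week of lunches for several people.
function scaleIngredients(recipe: Recipe, servingsNeeded: number): { name: string; grams: number }[] {
  const factor = servingsNeeded / recipe.servings;
  return recipe.ingredients.map((ing) => ({ name: ing.name, grams: ing.grams * factor }));
}
```

For example, a recipe that makes 5 servings scaled to 10 lunches doubles every ingredient weight, which is the quantity the shopping list needs.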

The goal: my wife can tell Lucy "Monday we're doing chili, Tuesday we're doing burgers, meal prep — Tony's making margarita chicken for himself and Erak, I'm doing turkey and beans" — and Lucy knows which store we buy each ingredient from, knows what order we shop those stores in, and generates separate shopping lists for Costco and Smith's, ordered by aisle. We mark off anything we already have and go.
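Since this version is still under development, the following is only a sketch of the list-building step: drop what is already on hand, split by store in shopping order, and sort each list by aisle. The item fields and aisle numbers are hypothetical.

```typescript
interface ShoppingItem {
  name: string;
  store: string;         // which store this ingredient is bought from
  aisle: number;         // hypothetical aisle number within that store
  quantity: string;
  haveAlready?: boolean; // marked off by the user
}

interface StoreList {
  store: string;
  items: ShoppingItem[];
}

// Builds one list per store, in the order the stores are visited,
// with items sorted by aisle and already-owned items removed.
function buildShoppingLists(items: ShoppingItem[], storeOrder: string[]): StoreList[] {
  const needed = items.filter((item) => !item.haveAlready);
  return storeOrder
    .map((store) => ({
      store,
      items: needed
        .filter((item) => item.store === store)
        .sort((a, b) => a.aisle - b.aisle),
    }))
    .filter((list) => list.items.length > 0);
}

const exampleLists = buildShoppingLists(
  [
    { name: "chicken", store: "Costco", aisle: 12, quantity: "3 kg" },
    { name: "rice", store: "Costco", aisle: 3, quantity: "2 kg" },
    { name: "beans", store: "Smith's", aisle: 7, quantity: "4 cans", haveAlready: true },
    { name: "burger buns", store: "Smith's", aisle: 2, quantity: "8" },
  ],
  ["Costco", "Smith's"],
);
```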

Results

The numbers don't lie.

380 to 285. Resting heart rate from 77 to 65. Labs suggest the NAFLD has likely reversed, though I'd need an ultrasound to confirm. The AI can see it all in the Health Connect data — walks are the right intensity, I'm operating more efficiently across the board. About a year after that stomach bug, I feel and look better than I have in my entire adult life — and I'm still going, every day, with the help of Lucy.

Yes, I'm accepting the risk that AI might be wrong. But I still haven't been able to get in to see my PCP. If I'd waited for that, I'd still be going in the wrong direction.

Family

It's not just me anymore.

My wife saw what was happening and wanted in. She has her own profile on the system now — her own AI personas, her own metrics, her own history. She loves looking at her spreadsheet of progress.

My 13-year-old son recently joined too. It's turned into a family thing — a shared motivator to live healthier, longer lives. That matters more to me than any technical achievement on this list.

Stack

What's under the hood.

- Next.js — web frontend, authenticated with 2FA

- Kotlin — Android companion app, Health Connect integration

- Express.js — backend API on DigitalOcean, handles inference calls and tool execution

- DeepInfra — inference provider, multi-model (Kimi K2.5, OpenAI OSS 120B Turbo, Gemma 3 for vision)

- MySQL — personas, memories, food logs, exercise logs, lab results, Health Connect cache

- Google Health Connect API — daily polling for Fitbit data (heart rate, steps, sleep, activity)

Want One?

Your health data deserves better than a generic app.

If you want a personal AI health system that actually knows you — your labs, your history, your daily habits, your goals — I've built the framework. Custom AI health assistants, fitness tracking integration, lab interpretation, persona-based coaching. This isn't a SaaS product with 10,000 users and generic advice. It's software built for one person at a time.

Get in touch and let's talk about what you need.